Google’s Gemma 4 Could Be Its Biggest Open Model Move Yet

Google has unveiled Gemma 4, a new family of open-weight AI models built from the same research line as Gemini 3. Stronger reasoning is the obvious selling point, but the more important shift may be the license. Google is moving Gemma 4 to Apache 2.0, giving developers a clearer and more familiar path to commercial use.

The family includes four models: E2B, E4B, 26B A4B MoE, and 31B Dense. All four support text, image, and video input, while the smaller E2B and E4B models support audio too. Context windows are 128K on the smaller models and 256K on the larger ones.

Gemma 4 also supports more than 140 languages, with 35-plus available out of the box.

Google is clearly trying to make Gemma useful across more than one type of machine. The smaller models are aimed at local and edge use, while the larger ones are built for heavier reasoning work on stronger hardware. In Google’s own memory estimates, the 4-bit versions start at about 3.2 GB for E2B and 5 GB for E4B. The larger 31B and 26B A4B models come in at roughly 17.4 GB and 15.6 GB. So this is not just a cloud story.

Google wants Gemma 4 running on phones, laptops, workstations, and servers.
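The memory figures above can be sanity-checked with simple arithmetic: a 4-bit weight takes half a byte, so the weights alone need roughly half a gigabyte per billion parameters. The sketch below assumes the parameter counts implied by the model names (31 billion and 26 billion) and treats a GB as 10^9 bytes; both are assumptions, not figures from Google. It covers only the two larger models and does not model anything beyond the raw weights.

```python
# Back-of-envelope weight memory: parameters * bits per weight / 8 bytes.
# Parameter counts are read off the model names (an assumption), and
# GB here means 10**9 bytes (also an assumption).

def weight_memory_gb(params_billions: float, bits_per_weight: int = 4) -> float:
    """Return the approximate memory for the weights alone, in GB."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9

# Figures quoted in the article for the 4-bit versions.
reported_gb = {"31B Dense": 17.4, "26B A4B": 15.6}
# Hypothetical nominal sizes, taken from the names only.
nominal_params_b = {"31B Dense": 31.0, "26B A4B": 26.0}

for name, params in nominal_params_b.items():
    estimate = weight_memory_gb(params)
    gap = reported_gb[name] - estimate
    print(f"{name}: ~{estimate:.1f} GB for weights, "
          f"reported {reported_gb[name]} GB, gap {gap:.1f} GB")
```

Under these assumptions the weights-only estimate lands a couple of gigabytes below each quoted figure. The sketch does not say what accounts for the difference, so treat it as a rough lower bound rather than a reproduction of Google's numbers.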

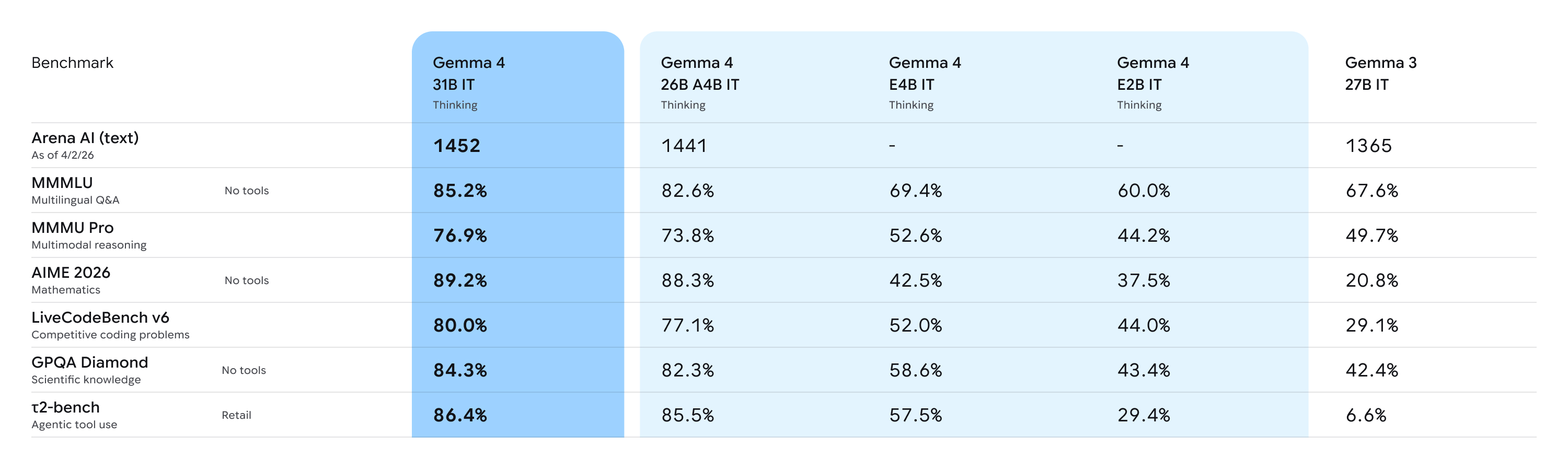

Google is leaning on the benchmark case as well. Its 31B Dense model scored 85.2 percent on MMLU-Pro, 89.2 percent on AIME 2026 without tools, and 80.0 percent on LiveCodeBench v6. The 26B A4B was lower across the same tests, at 82.6 percent, 88.3 percent, and 77.1 percent.

Gemma 4 is also rolling out quickly across Google’s stack. Google’s release notes say the models are already available in AI Studio and through the Gemini API. On the cloud side, Gemma 4 is live in Vertex AI Model Garden. On Android, developers are getting E2B and E4B through the AICore Developer Preview as groundwork for the next generation of Gemini Nano 4.

Google says that Android-side setup can be up to 4x faster and use up to 60 percent less battery than the previous generation.

The Gemma models have now passed 400 million downloads, with more than 100,000 variants created by developers. With Gemma 4, Google is pairing that momentum with better reasoning, broader deployment, and a license developers will have a much easier time saying yes to.

Y. Anush Reddy is a contributor to this blog.